And What Architecture Makes It Safe

Artificial intelligence is rapidly evolving from predictive tools into systems that can take actions on behalf of users. These systems are often described as agentic AI. Instead of only generating recommendations, agentic models can initiate workflows, execute decisions, and interact with external systems autonomously. Across industries, this capability is gaining momentum. Financial services, healthcare, insurance, and digital commerce platforms are all exploring how autonomous AI agents can automate complex operational tasks.

Yet many organizations encounter the same problem when they attempt to deploy these systems in regulated environments.

The models work.

The environment does not.

Agentic AI often performs well in isolated testing environments but becomes difficult to govern once connected to production systems that handle real transactions, customer data, and regulatory obligations. The issue is rarely the intelligence itself. The issue is whether the surrounding architecture can safely support autonomous behavior.

Why Are Regulated Environments Different for AI Systems?

Most digital platforms can tolerate some level of experimentation. If a recommendation engine produces a weak suggestion, the business impact is limited. Regulated systems operate under a different constraint. Financial services platforms, for example, process extremely sensitive workflows such as payments, credit decisions, fraud detection, identity verification, and transaction monitoring. These activities must comply with strict regulatory expectations including auditability, explainability, and traceability.

Recent industry analysis shows that more than 60 percent of financial institutions cite regulatory governance as the primary barrier to scaling AI systems beyond pilot deployments. Organizations are not hesitant about AI innovation. They are cautious about introducing autonomous behavior into environments where every decision must be explainable under regulatory scrutiny. Agentic AI introduces new complexity because it does not simply produce outputs. It takes actions.

And actions must always be accountable.

What Actually Breaks When Agentic AI Meets Production Systems?

Most organizations assume that deploying autonomous AI primarily requires improving model accuracy. In reality, the failures usually appear elsewhere. When agentic systems begin interacting with production infrastructure, three structural issues emerge.

First, data governance often becomes unclear. Autonomous systems rely on multiple data sources and decision inputs. If those sources lack consistent definitions or lineage tracking, the AI agent may produce actions that cannot be explained afterward.

Second, operational visibility becomes insufficient. Traditional monitoring tools are designed to track infrastructure metrics such as CPU usage or service uptime. They rarely capture how an AI system arrived at a decision or how that decision propagated through downstream systems.

Third, responsibility becomes ambiguous. Autonomous systems blur the boundary between application logic, platform infrastructure, and operational workflows. When an agent makes a decision that affects customer accounts or financial transactions, teams must be able to determine exactly which system initiated that behavior and why. Without this architectural clarity, organizations quickly revert to pilot environments rather than deploying AI into production.

Why Does Traditional Monitoring Fail to Govern Agentic AI?

Many organizations believe their monitoring infrastructure already provides sufficient oversight. Traditional monitoring tools detect when infrastructure metrics cross predefined thresholds. They can alert teams when a service goes down or a workload exceeds expected capacity. But monitoring only confirms that systems are running. Observability explains how they behave.

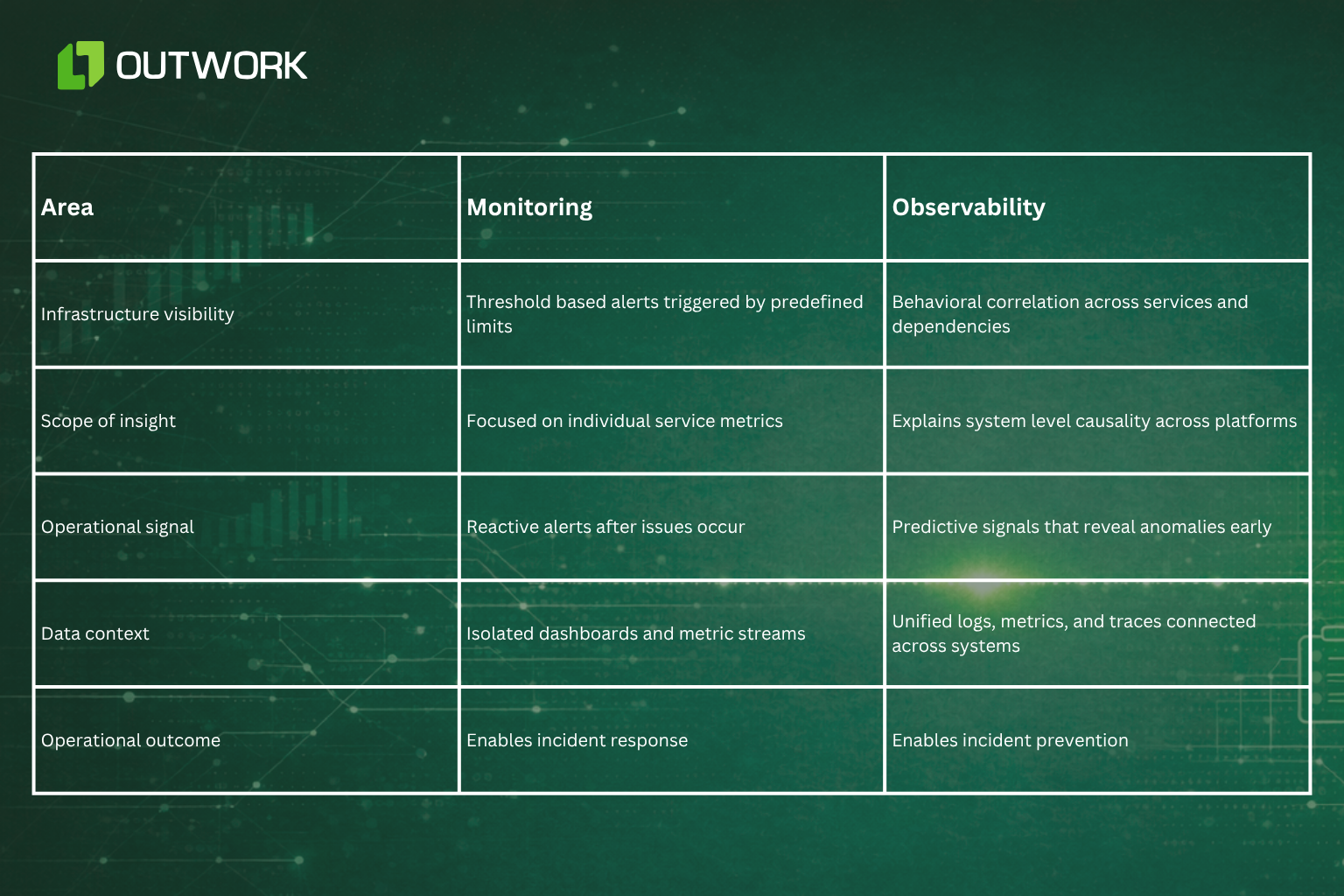

Autonomous AI systems require a different level of visibility. Teams must understand how decisions are formed, how they interact with downstream systems, and how unexpected behavior can be traced across the entire platform. The difference becomes clearer when comparing monitoring with observability.

Monitoring vs Observability: A Practical Comparison

Monitoring confirms that something crossed a limit. Observability explains why it happened and what it affects next.

For agentic AI systems operating inside regulated environments, that difference becomes critical.
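As a rough illustration of that contrast, the Python sketch below places a threshold check next to a structured decision event. Every name in it (`CPU_ALERT_THRESHOLD`, `DecisionEvent`, the agent and system names, the sample values) is a hypothetical chosen for this example, not part of any particular monitoring or observability product.

```python
import json
from dataclasses import dataclass, field, asdict

# Hypothetical limit, chosen only for illustration.
CPU_ALERT_THRESHOLD = 0.90


def monitoring_alert(cpu_utilization: float) -> bool:
    """Monitoring: report only whether a metric crossed a predefined limit."""
    return cpu_utilization > CPU_ALERT_THRESHOLD


@dataclass
class DecisionEvent:
    """Observability: record why an agent acted and what the action touches next."""
    trace_id: str          # correlates this decision with downstream telemetry
    agent: str             # which system initiated the behavior
    action: str            # what the agent did
    inputs: dict           # the decision inputs the agent relied on
    reason: str            # a human-readable explanation of the decision
    downstream_systems: list = field(default_factory=list)

    def to_log_line(self) -> str:
        """Serialize the event as one JSON line for a log or event pipeline."""
        return json.dumps(asdict(self), sort_keys=True)


# Illustrative values only.
event = DecisionEvent(
    trace_id="trace-001",
    agent="payments-agent",
    action="hold_transaction",
    inputs={"amount": 12500, "risk_score": 0.87},
    reason="risk_score above review threshold",
    downstream_systems=["ledger", "case-management"],
)

print(monitoring_alert(0.95))
print(event.to_log_line())
```

The threshold check answers a yes or no question about infrastructure. The decision event carries the inputs, the stated reason, and the affected systems, which is the information a reviewer would need to reconstruct the action later.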

What Architecture Allows Agentic AI to Operate Safely?

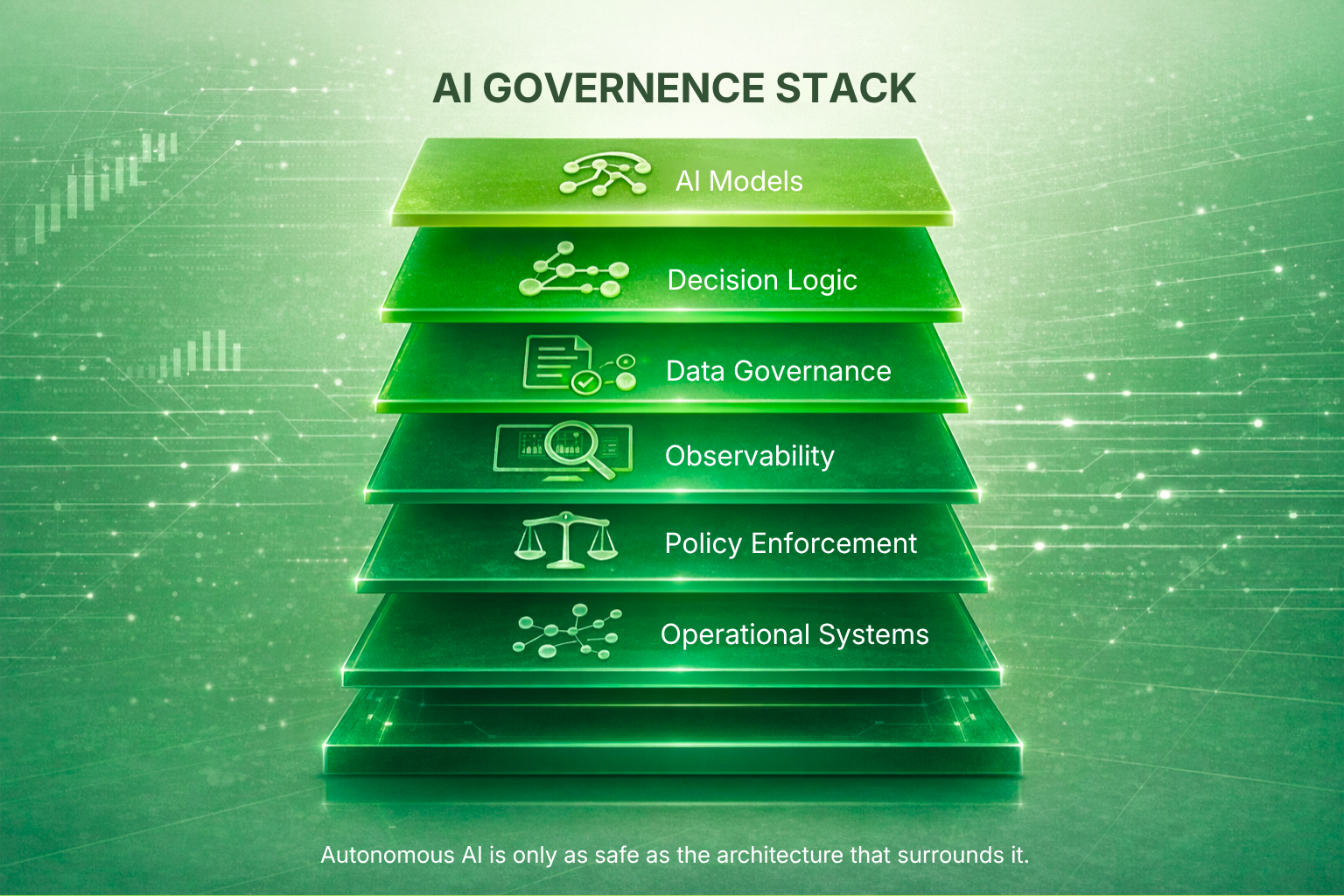

Organizations that successfully deploy autonomous systems rarely treat AI as an isolated feature. Instead, they introduce architectural guardrails that make autonomous decision-making observable and accountable. Production-ready agentic AI environments typically include several structural capabilities.

Clear decision lineage allows every automated action to be traced back to its inputs and model context. Policy-driven governance ensures that compliance rules are enforced automatically at the platform level rather than relying on manual review.
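One way to picture decision lineage and policy-driven governance together is a small gate that every proposed action passes through before it executes. The sketch below is a minimal illustration under stated assumptions: `ProposedAction`, `max_amount_policy`, `lineage_required_policy`, the 10,000 limit, and the source names are all hypothetical, and a real platform would load its rules from a governed policy store rather than hard-code them.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class ProposedAction:
    """An action an agent wants to take, carrying its own lineage."""
    action_id: str
    kind: str
    amount: float
    input_sources: tuple   # where the decision inputs came from
    model_version: str     # the model context behind the decision


@dataclass(frozen=True)
class PolicyResult:
    allowed: bool
    violations: tuple


# A policy returns a violation message, or None when the action complies.
Policy = Callable[[ProposedAction], Optional[str]]


def max_amount_policy(limit: float) -> Policy:
    """Build a policy that rejects actions above a monetary limit."""
    def check(action: ProposedAction) -> Optional[str]:
        if action.amount > limit:
            return f"amount {action.amount} exceeds limit {limit}"
        return None
    return check


def lineage_required_policy(action: ProposedAction) -> Optional[str]:
    """Reject actions that cannot be traced to inputs and a model version."""
    if not action.input_sources or not action.model_version:
        return "missing decision lineage"
    return None


def evaluate(action: ProposedAction, policies: list) -> PolicyResult:
    """Run every policy and collect all violations, not just the first."""
    violations = tuple(
        message for message in (policy(action) for policy in policies)
        if message is not None
    )
    return PolicyResult(allowed=not violations, violations=violations)


# Hypothetical rule set for illustration.
POLICIES = [max_amount_policy(10_000), lineage_required_policy]

refund = ProposedAction("a-1", "refund", 500.0, ("core_ledger",), "model-v1")
print(evaluate(refund, POLICIES))
```

Because the gate collects every violation rather than stopping at the first, the result doubles as an audit record of why an action was blocked.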

Unified observability connects system telemetry with decision-level insights so that engineers can understand how AI behavior affects operational workflows. Incremental rollout patterns such as canary deployments allow organizations to introduce AI capabilities gradually rather than exposing entire systems to autonomous behavior at once.
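A canary rollout for agent behavior can be sketched as deterministic bucketing: each account hashes to a stable bucket, and only buckets below the current rollout percentage are handled by the agent. The function names and the routing labels below are assumptions made for this example, and hashing on an account identifier is one possible choice among several.

```python
import hashlib


def in_canary(account_id: str, rollout_percent: int) -> bool:
    """Return True if this account falls inside the current canary slice.

    The bucket comes from a hash of the account ID, so the same account
    always gets the same answer for a given percentage, and raising the
    percentage only ever adds accounts to the slice.
    """
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    digest = hashlib.sha256(account_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable value in 0..99
    return bucket < rollout_percent


def route(account_id: str, rollout_percent: int) -> str:
    """Send canary accounts to the agent and everyone else to the existing path."""
    return "agent" if in_canary(account_id, rollout_percent) else "existing_workflow"


# Hypothetical account IDs; at 10 percent, roughly one in ten routes to the agent.
for account in ("acct-1001", "acct-1002", "acct-1003"):
    print(account, route(account, 10))
```

Setting the percentage to 0 acts as an immediate rollback switch, since no bucket is below zero and every account returns to the existing workflow.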

These architectural foundations allow AI to operate safely without sacrificing regulatory transparency.

What Market Signals Show the Urgency of AI Governance?

Industry research indicates that organizations are accelerating investment in autonomous AI capabilities while governance frameworks continue to lag behind.

Global AI spending is projected to exceed 500 billion dollars within the next few years as companies embed AI into core business processes. At the same time, regulatory authorities across financial markets are introducing new guidelines that require stronger model governance, transparency, and operational accountability.

Financial regulators in several regions have already issued guidance requiring institutions to demonstrate explainability and control over automated decision systems. These requirements extend beyond model development. They apply to the entire operational architecture surrounding AI systems.

Organizations that cannot demonstrate traceability, governance, and operational oversight risk slowing down AI adoption even when their models are technically capable. This is why architecture is becoming the decisive factor in scaling autonomous systems.

Operational Implication: What Leaders Should Decide Before Deploying Agentic AI

Executives evaluating autonomous AI initiatives should shift the conversation from model capability to system readiness. Before introducing agentic systems into production environments, teams should confirm several operational conditions. Data ownership must be clearly defined so that AI decisions can always be traced to authoritative sources.

Observability must capture the full lifecycle of AI-driven actions, including inputs, decision context, and downstream effects. Governance policies must be enforced automatically rather than relying on manual oversight. Deployment strategies should allow AI behavior to be introduced gradually through controlled rollout patterns.

These conditions transform AI from an experimental capability into an operational asset.

Why Architecture Determines the Future of Autonomous AI

Agentic AI represents one of the most powerful shifts in enterprise technology. Systems are moving from analysis to action. But autonomy without accountability creates risk.

Organizations that treat architecture as the foundation for AI adoption are discovering that autonomous systems can operate safely even inside highly regulated environments. Their platforms are designed to capture decision lineage, enforce governance policies, and provide real-time operational visibility. Organizations that skip this architectural discipline often find themselves stuck in pilot programs. The difference is not intelligence.

The difference is the system that carries it.

Outwork POV

At Outwork, we approach agentic AI from an operational perspective rather than a model perspective. Our focus is on stabilizing the systems that autonomous intelligence will interact with. That includes strengthening data governance, embedding observability into production platforms, and implementing policy-driven operational controls.

When architecture supports transparency and accountability, agentic AI becomes safe to operate in environments where reliability and compliance are essential.

And that is where autonomous systems begin delivering real value.