Why Most AI Projects Fail After Pilot — And What Production Systems Require Instead

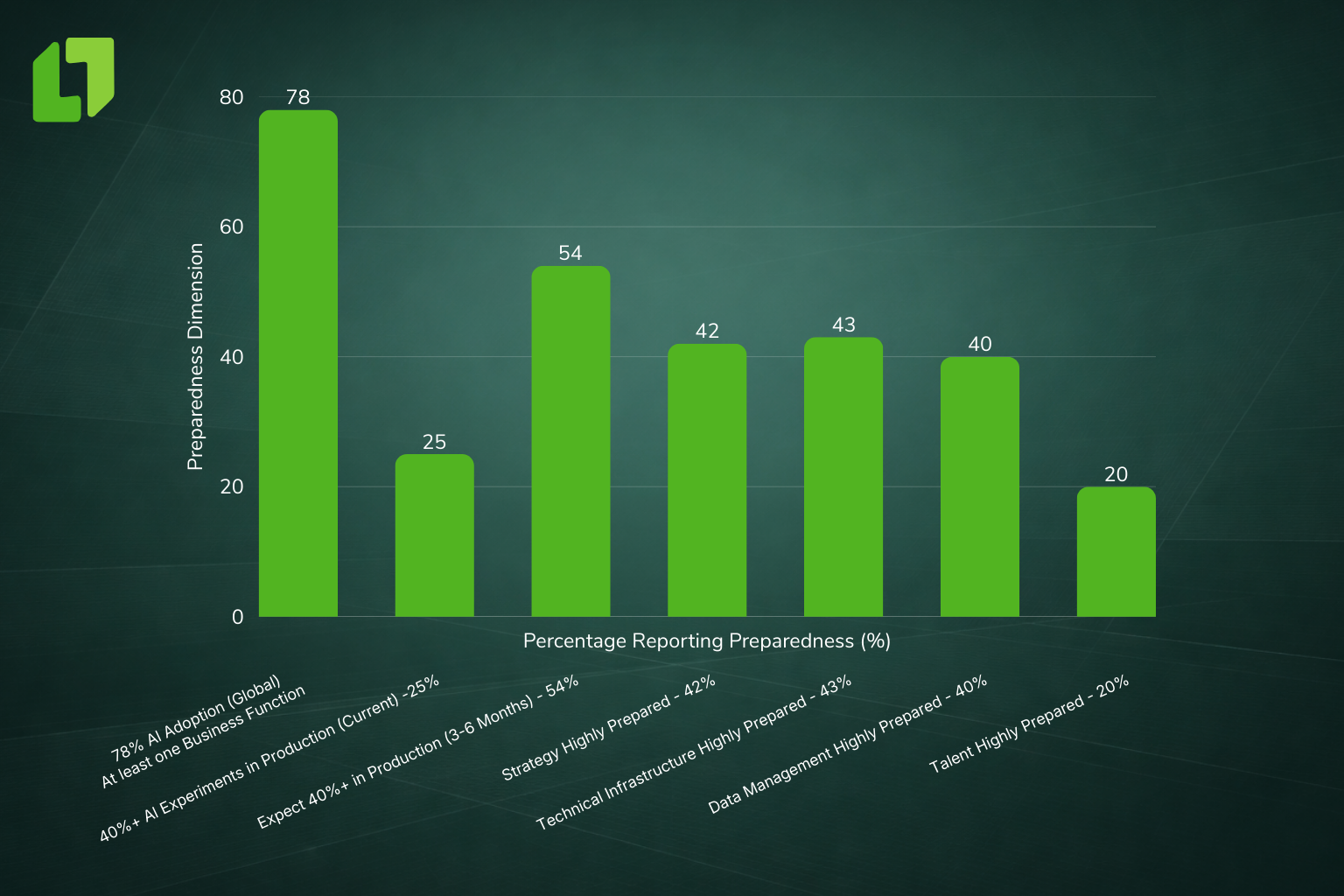

AI adoption isn’t the problem anymore. Teams can train models, run demos, and spin up cloud capacity. The problem is getting intelligence to live inside systems that actually run the business. Too many pilots succeed in controlled environments and then stall at the point of production, not because models are weak, but because the operating platform underneath them is.

The landscape most people assume

Over the last three years, the conversation about AI has moved from “Can we build it?” to “Can we run it?” Benchmarks show that many organisations launch dozens of pilots, but only a minority reach sustained production and deliver measurable business value. That gap is why leadership still hears “AI is hard” even when the technical teams say “the models work.”

The real constraint: operational readiness, not models

Where most efforts break down is in the handoff between model and operations. Production systems are not a neutral environment. They carry history: quick integrations, “temporary” scripts, duplicated records, piecemeal monitoring, and informal ownership. When you add AI into that environment, it amplifies whatever is already there, and the result is friction, exceptions, and stalled rollouts.

What “works”, but still blocks AI

Many platforms “work” in the sense that pages render, transactions complete, and dashboards update. But working in production at scale means more than “it runs.” It means predictable behavior under pressure, consistent definitions, traceable decisions, and low operational overhead. When those aren’t in place, AI output becomes ungovernable and teams fall back to running pilots.

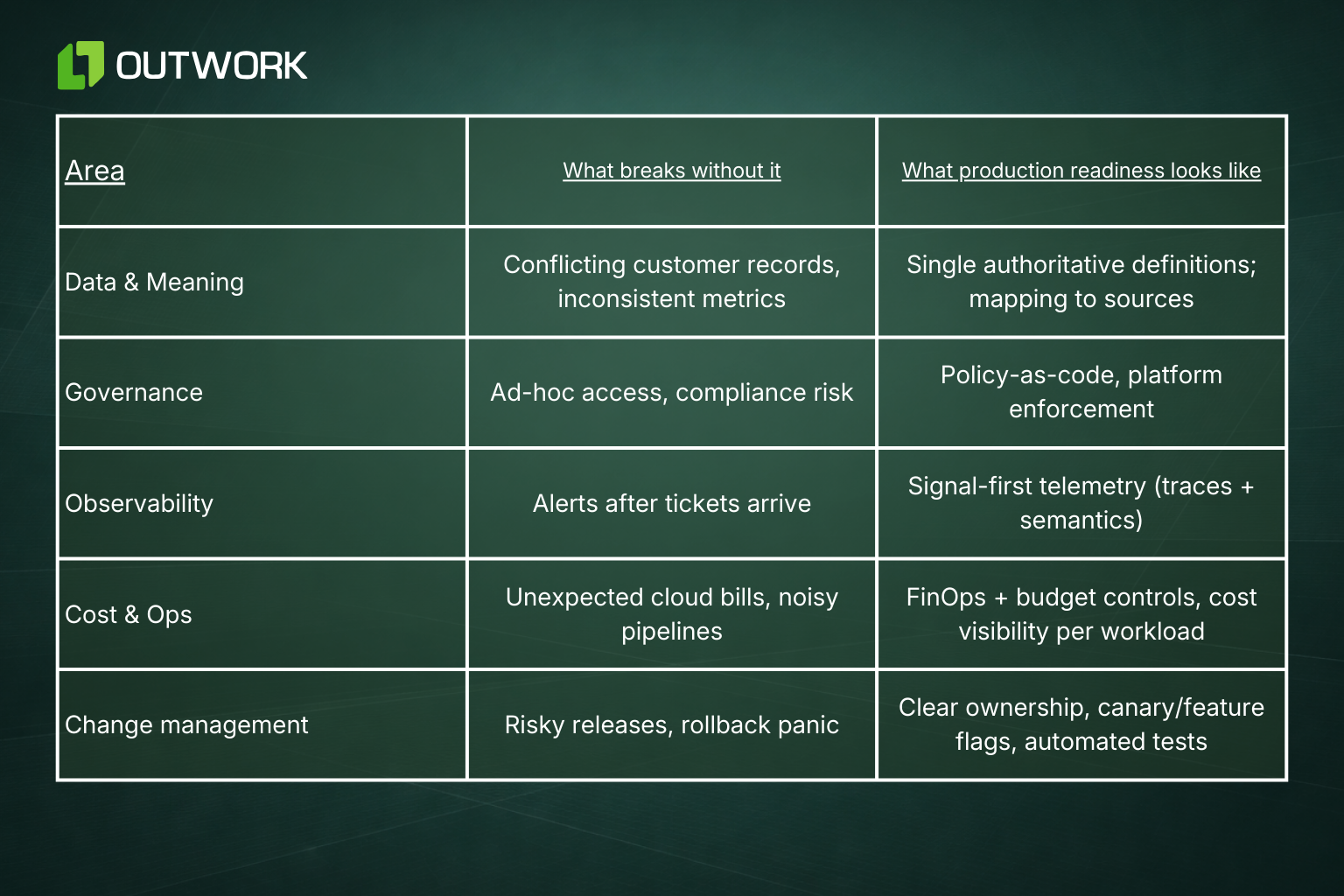

What production-ready systems actually require (short, practical list)

When you design for production-first AI, the checklist is operational and concrete:

- Clear data ownership and single sources of truth for key entities (customer, account, contract).

- Platform-level governance so policies (access, retention, classification) are applied automatically.

- Observability that connects data, model inputs, and downstream effects (not just service metrics).

- Cost clarity and FinOps controls so AI workloads don’t silently balloon cloud bills.

- Incremental modernization tactics that stabilize interfaces before automating them.

These are not “nice-to-haves.” They are the minimum conditions for any AI to move from pilot to durable operations.
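To make the ownership, observability, and cost items concrete, here is a minimal sketch of a per-call trace record that ties a model's inputs to an accountable data owner, a run cost, and the downstream outcome. Every name in it (`PredictionTrace`, `TraceLog`, the field names) is a hypothetical chosen for illustration, not a reference to any particular platform or library.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class PredictionTrace:
    """One auditable record per model call (all field names are illustrative)."""
    trace_id: str
    model_version: str
    data_owner: str                 # team accountable for the input entity
    input_hash: str                 # fingerprint of the exact features the model saw
    prediction: float
    cost_usd: float                 # run cost attributed to this call
    outcome: Optional[str] = None   # filled in later by the downstream system


def fingerprint(features: dict) -> str:
    # Canonical JSON so the same features always produce the same hash.
    payload = json.dumps(features, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]


class TraceLog:
    """In-memory stand-in for whatever store a real platform would use."""

    def __init__(self) -> None:
        self._traces: Dict[str, PredictionTrace] = {}

    def record(self, trace: PredictionTrace) -> None:
        self._traces[trace.trace_id] = trace

    def get(self, trace_id: str) -> PredictionTrace:
        return self._traces[trace_id]

    def attach_outcome(self, trace_id: str, outcome: str) -> None:
        # Links the downstream business effect back to the model call.
        self._traces[trace_id].outcome = outcome

    def cost_by_owner(self) -> Dict[str, float]:
        # Makes run cost visible per accountable team.
        totals: Dict[str, float] = {}
        for t in self._traces.values():
            totals[t.data_owner] = totals.get(t.data_owner, 0.0) + t.cost_usd
        return totals
```

The point of the design is that a single record answers three questions at once: who owns the data, what the model saw and decided, and what it cost. Service metrics alone cannot answer any of them.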

Framework: How to think about AI production readiness

Operational implication: what executives must decide now

If you lead a product, platform, or operations function, this is the practical ask:

- Stop treating modernization as “phase 2.” Move minimal operational scaffolding ahead of model rollout: ownership, observability, and governance.

- Quantify the run cost of AI pilots and add FinOps controls that make that cost visible to product owners.

- Treat every AI rollout like a change to a regulated workflow: plan canaries, rollback, and explainability from day one.

- Require at least one measurable operational KPI before any AI use case moves from pilot to production (e.g., mean time to detect drift, cost per API call, or error rate under load).

These are the levers that turn AI from an experiment into dependable capability.
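The KPI requirement can be enforced mechanically rather than by review meeting. The sketch below shows one possible shape for a promotion gate: a use case moves to production only if every required KPI has been measured and sits within its threshold. The KPI names, threshold values, and the `promotion_gate` function are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class KpiThreshold:
    name: str
    max_value: float  # the KPI must be at or below this value to pass
    unit: str


def promotion_gate(
    measured: Dict[str, float],
    thresholds: List[KpiThreshold],
) -> Tuple[bool, List[str]]:
    """Return (approved, reasons). An unmeasured KPI counts as a failure."""
    failures: List[str] = []
    for t in thresholds:
        value = measured.get(t.name)
        if value is None:
            failures.append(f"{t.name}: not measured")
        elif value > t.max_value:
            failures.append(
                f"{t.name}: {value} {t.unit} exceeds {t.max_value} {t.unit}"
            )
    return (not failures, failures)


# Hypothetical thresholds; real values depend on the workload.
THRESHOLDS = [
    KpiThreshold("cost_per_call", 0.01, "USD"),
    KpiThreshold("error_rate_under_load", 0.02, "ratio"),
]

approved, reasons = promotion_gate(
    {"cost_per_call": 0.004, "error_rate_under_load": 0.05},
    THRESHOLDS,
)
```

Treating a missing measurement as a failure is deliberate: a pilot that has never measured its error rate under load has not shown that it is ready, whatever the demo looked like.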

The long view

AI production readiness is not a checklist you complete once. It is a discipline you institutionalise: platform patterns, policy-as-code, and a culture that values predictability as highly as innovation. Companies that adopt that posture get AI benefits at scale; those that don’t end up with a portfolio of pilots, visible on slide decks but invisible where value is created.
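As a rough illustration of what policy-as-code can look like, the sketch below declares governance rules as data and evaluates them automatically against dataset metadata. The `Dataset` fields, the policy names, and the 365-day retention limit are all assumptions for the example, not recommendations.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence


@dataclass(frozen=True)
class Dataset:
    name: str
    owner: str            # empty string means nobody is accountable
    classification: str   # e.g. "public", "internal", "restricted"
    retention_days: int


@dataclass(frozen=True)
class Policy:
    name: str
    check: Callable[[Dataset], bool]  # returns True when the dataset complies


ALLOWED_CLASSES = {"public", "internal", "restricted"}

# Hypothetical rules; a real platform would load these from version control.
POLICIES: Sequence[Policy] = (
    Policy("has-owner", lambda d: bool(d.owner)),
    Policy("classified", lambda d: d.classification in ALLOWED_CLASSES),
    Policy(
        "restricted-retention-max-365d",
        lambda d: d.classification != "restricted" or d.retention_days <= 365,
    ),
)


def violations(
    datasets: Sequence[Dataset],
    policies: Sequence[Policy] = POLICIES,
) -> Dict[str, List[str]]:
    """Map each non-compliant dataset name to the policies it fails."""
    report: Dict[str, List[str]] = {}
    for d in datasets:
        failed = [p.name for p in policies if not p.check(d)]
        if failed:
            report[d.name] = failed
    return report
```

Because the rules live in code, they can be reviewed, versioned, and run on every change, which is what makes readiness a continuing discipline rather than a one-off audit.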

Outwork POV

Outwork’s approach is to modernize the system that will carry intelligence, not the intelligence on top. We stabilise ownership, embed observability, and build platform governance so AI becomes an outcome the business can rely on, not an expensive, recurring experiment.