The Incident Rarely Starts as an Outage

Most production failures do not begin with a crash. They begin with a pattern. A small latency increase in one service. A retry rate that quietly climbs. A queue that drains slower than usual. An AI inference endpoint that becomes inconsistent under load. By the time traditional monitoring triggers an alert, users may already be affected.

In 2026, distributed architectures, AI workloads, multi cloud deployments, and API ecosystems have made system behavior more complex than ever. The question is no longer whether something is up or down. The question is whether teams understand why it behaves the way it does before customers notice. This is where observability separates mature operations from reactive ones.

What Is Observability vs Monitoring?

Monitoring tracks predefined metrics and raises alerts when thresholds are crossed. Observability enables teams to understand system behavior by correlating logs, metrics, traces, and contextual signals to identify root causes before user impact.

Monitoring answers: Is something broken?

Observability answers: Why is it behaving this way?

That difference is structural, not cosmetic.

Why Most Teams Believe Monitoring Is Enough

Most technology teams already have monitoring in place. Infrastructure dashboards show CPU usage, memory, network throughput, and uptime percentages. Alerts notify teams when services degrade beyond defined limits. On paper, coverage looks strong.

Yet recent reliability benchmarks show that many incidents are still detected by customers before internal alerts surface the issue. At the same time, engineering surveys consistently report high Mean Time to Resolution caused not by lack of alerting, but by slow root cause identification across distributed systems. Organizations assume that better thresholds and more alerts will fix this.

In practice, more alerts often increase noise rather than clarity.

Why Monitoring Alone Breaks Down in 2026 Architectures

Modern production environments now include:

- Microservices spanning hundreds of interdependent components

- Multi cloud and hybrid infrastructure

- Real time data pipelines

- AI inference services with dynamic workloads

- External API dependencies

In such environments, failures propagate across boundaries.

A small configuration drift in one service can create latency spikes downstream. A schema mismatch in a data pipeline can affect model predictions. A change in traffic routing can shift cost patterns and performance simultaneously. Monitoring tools surface isolated signals. They rarely show relationships between them. Without correlated telemetry, teams see symptoms instead of system behavior. (This is where data architecture defines how systems reveal behavior.)

Why Reliability Is Getting Harder in Distributed Systems

Distributed system adoption continues to expand. Industry DevOps reports show that a majority of mid to large scale organizations now operate service based architectures rather than monolithic stacks. At the same time, incident frequency tied to configuration changes and deployment errors remains significant.

Cloud infrastructure growth is accelerating alongside AI adoption. As organizations scale inference services and event driven pipelines, telemetry volume increases dramatically. Many teams report that while visibility tools exist, contextual insight is fragmented across separate dashboards.

This creates a measurable gap:

Systems are more observable in theory, yet harder to understand in practice. Monitoring coverage has increased. Operational clarity has not increased proportionally.

Observability Is a System Property, Not a Tool Category

True observability requires structural design decisions.

It includes:

- Distributed tracing that follows requests across services

- Structured logs aligned to business transactions

- Metrics tied to domain entities rather than only infrastructure

- Telemetry that correlates cost, performance, and workload behavior

- Contextual dashboards that connect signals instead of isolating them

This shifts operations from reactive alert response to behavioral understanding. In a monitored system, teams respond to incidents. In an observable system, teams detect degradation patterns before escalation.

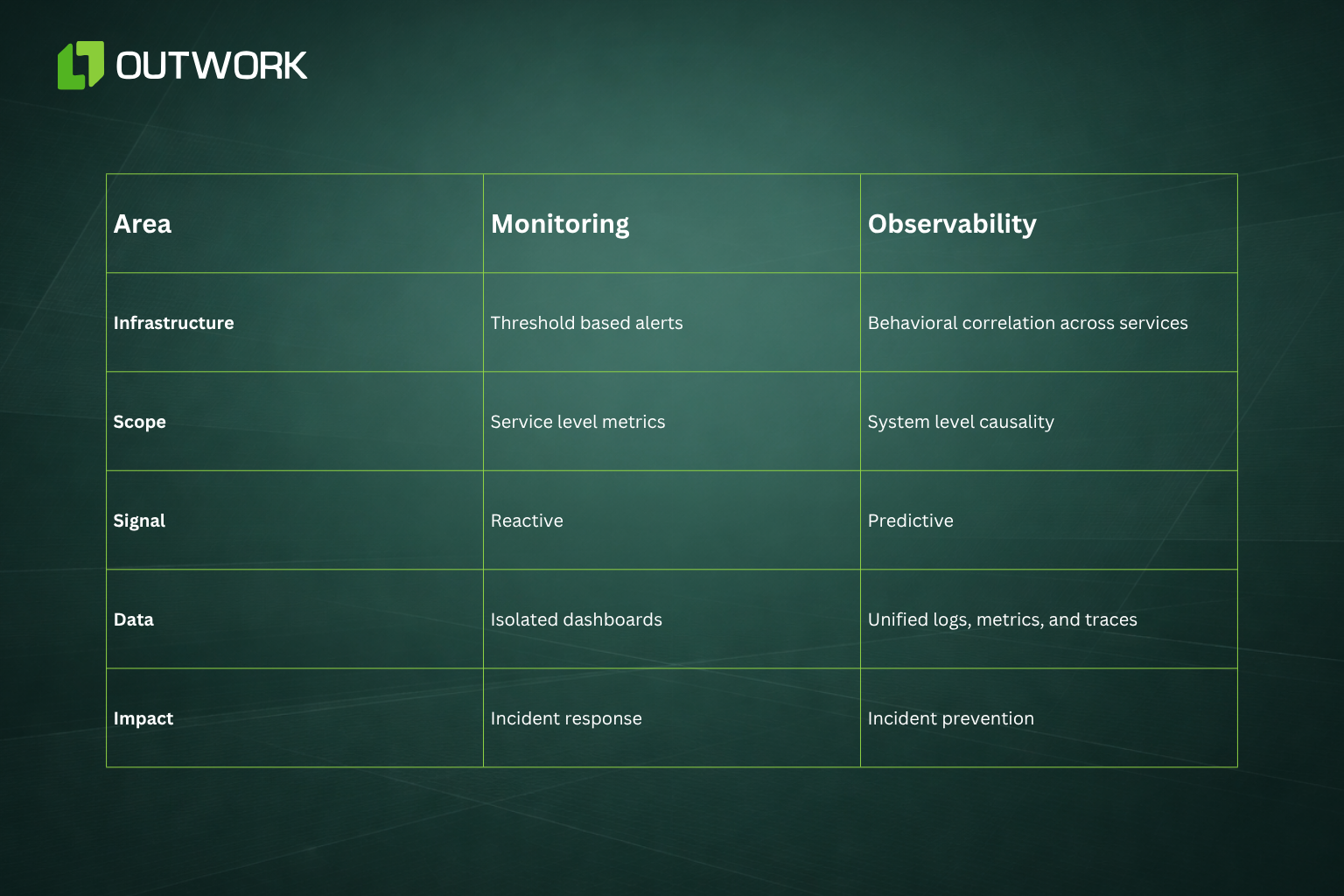

Monitoring vs Observability: A Practical Comparison

How Observability Reduces Operational Risk

When observability is embedded into architecture, the benefits extend beyond uptime.

- Mean Time to Resolution decreases because root cause is visible

- Customer impact is reduced because anomalies are detected earlier

- Cloud cost spikes can be traced to workload behavior rather than monthly invoices

- AI model drift can be identified before prediction quality degrades significantly

- Compliance investigations become faster because traceability is built in

Reliability in 2026 is no longer measured only by uptime percentages. It is measured by how often customers experience instability at all. Observability directly influences that outcome.

Operational Decisions That Change Reliability

If you lead platform, product, or engineering teams, the strategic question is not whether you have monitoring coverage. It is whether your systems are explainable under stress.

Practical decisions include:

- Require distributed tracing for all new services before production release.

- Tie telemetry to business entities such as customer, transaction, or workflow rather than only servers and containers.

- Connect cost telemetry with performance signals to detect inefficient workloads early.

- Include model drift detection and inference monitoring as part of AI production governance.

- Standardize observability patterns at the platform layer rather than leaving tooling decisions to individual teams.

Observability must be designed before complexity compounds. Retrofitting it later increases operational friction exponentially. (Learn how Systems built for speed rarely sustain clarity under real load.)

How Observability Prevents Incidents in Practice

The Future of Production Reliability

Monitoring ensures that systems are running. Observability ensures that teams understand how systems behave under pressure. As architectures grow more distributed and AI systems become embedded into production workflows, tolerance for blind spots shrinks.

Organizations that invest in observability as a core platform capability prevent incidents before they escalate. Organizations that rely solely on monitoring continue reacting to thresholds that are triggered too late.

Reliability is no longer defined by whether something breaks. It is defined by whether you saw it coming.

Outwork POV

At Outwork, observability is treated as an architectural foundation rather than a dashboard enhancement. We embed telemetry at the platform layer, align traces with business workflows, and ensure AI and data systems are observable before automation scales.

That is how teams move from alert fatigue to operational control.